Every creator conversation this quarter eventually circles back to one model: Wan 2.7. Developed by Alibaba's Tongyi Lab, this latest iteration has become the most requested review in the AI video generation space, not because of marketing hype, but because it addresses the most persistent frustration creators face: maintaining consistent characters, precise scene control, and production-quality output across multiple shots. After extensive testing across real-world workflows, this comprehensive review breaks down what Wan 2.7 Video actually delivers, where it excels, and how it compares to competing models in 2026.

What Is Wan 2.7 and Why Does It Matter?

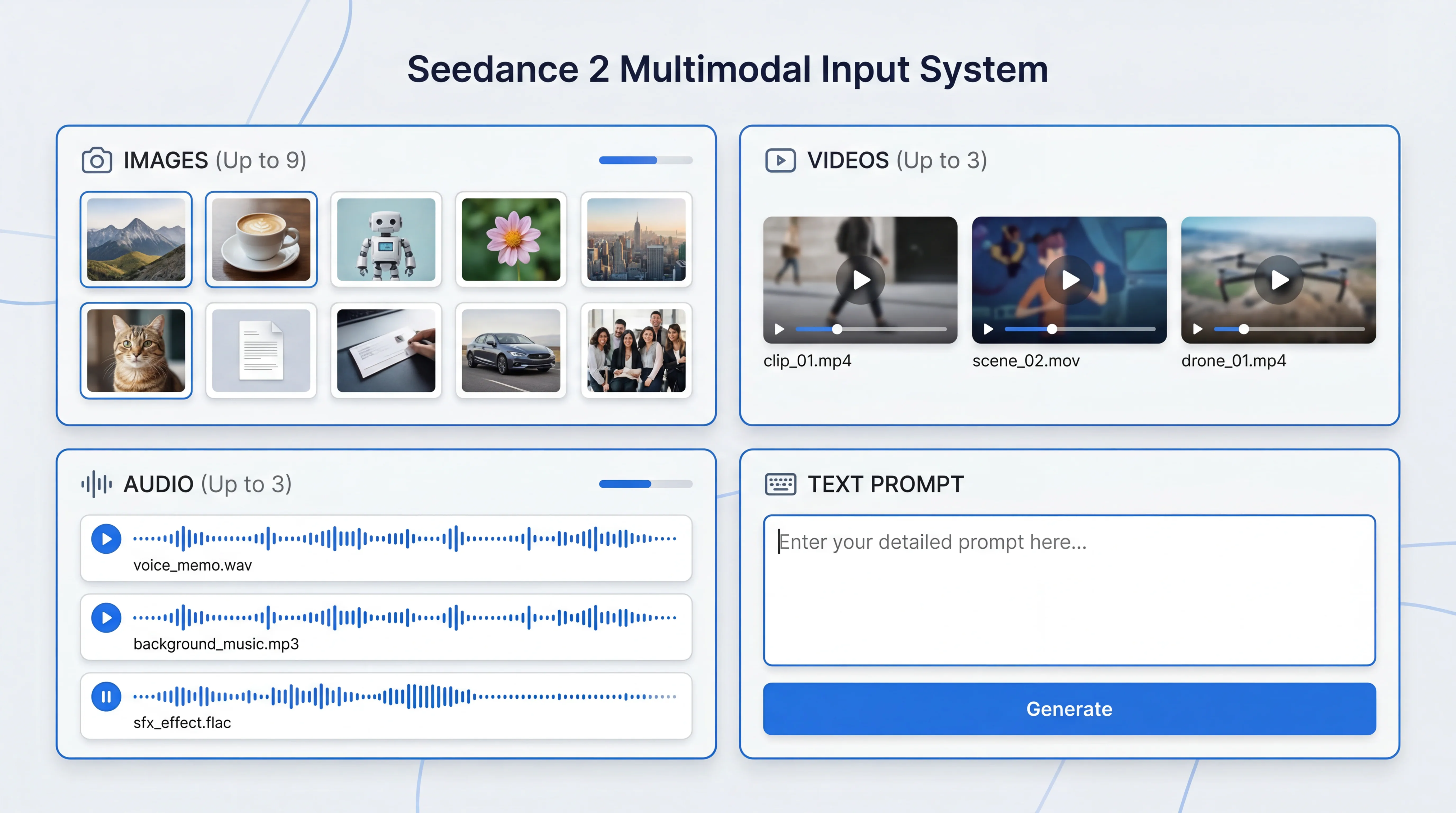

Wan 2.7 is a state-of-the-art AI video generation model built on a 27-billion-parameter Mixture-of-Experts (MoE) architecture. Unlike its predecessors, Wan 2.7 represents a fundamental shift from pure text-to-video generation toward a comprehensive video production toolkit with structured control mechanisms. The model generates cinematic 1080P HD videos from text descriptions, images, and even audio inputs, combining visual fidelity, audio synchronization, and motion consistency in a single unified framework.

What sets Wan 2.7 apart from earlier versions and competing models is not just parameter count or resolution. The real breakthrough lies in its multimodal injection capabilities and production-oriented feature set. Where Wan 2.6 required creators to type a text prompt and hope the model understood their intention, often resulting in visual distortion and unpredictable outputs, Wan 2.7 accepts images, videos, and audio as direct inputs and uses them as references for movement and lighting. The output follows the creator's intent far more closely than a text prompt alone allows.

The model's commercial viability is equally significant. Wan 2.7 operates on a credits-based pricing model with no monthly subscription fees, no platform lock-in, and credits that never expire. For agencies producing 50 ad variants per week, this means automating raw generation at under $50 in credits while reallocating human time to strategy and final review, a meaningful shift in production economics.

Core Features That Redefine AI Video Generation

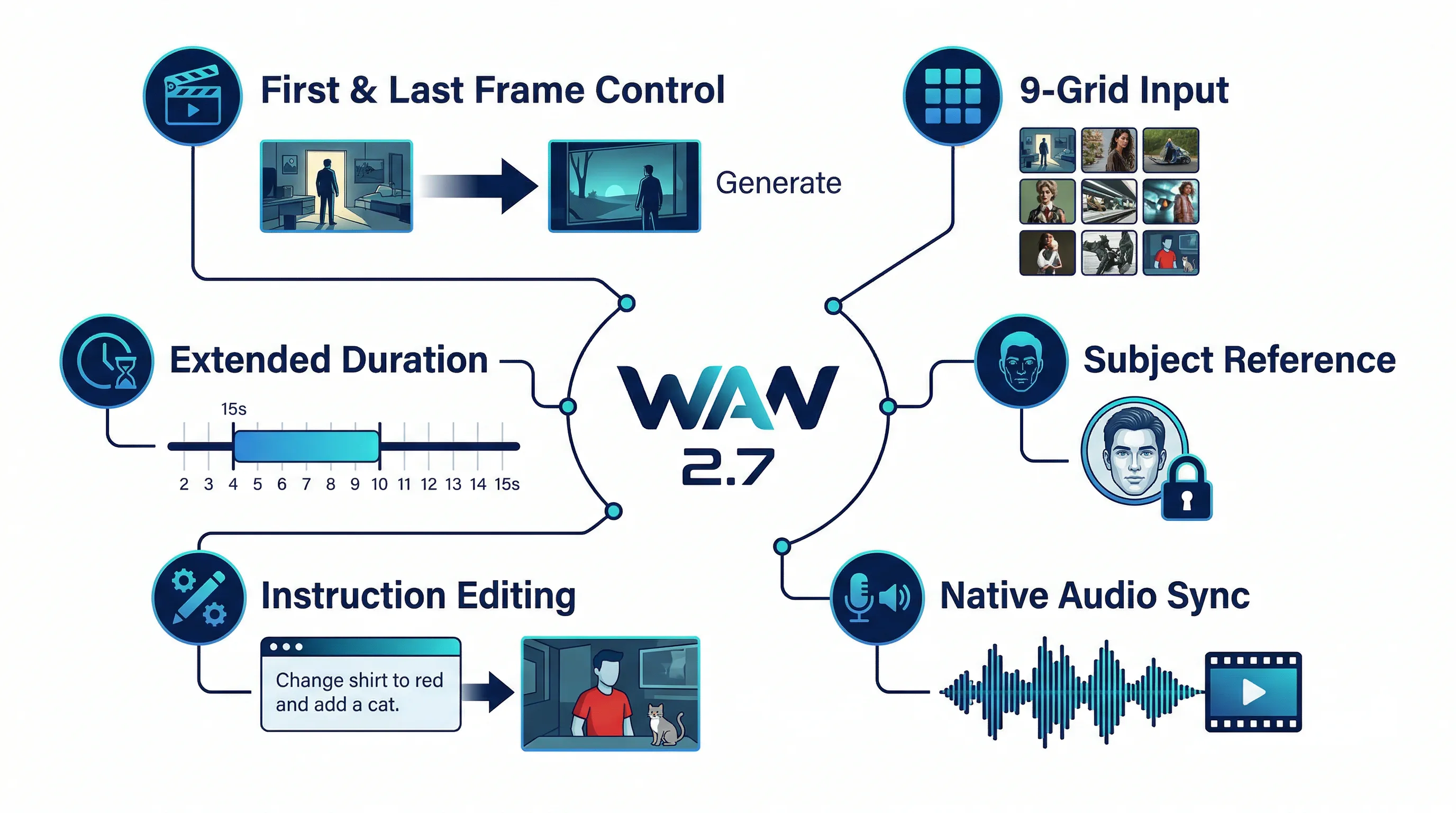

First-and-Last-Frame Control: Precision Storytelling

The most talked-about feature in Wan 2.7 is first-and-last-frame generation, a capability that fundamentally changes how creators approach AI video production. Instead of generating a clip and hoping it lands where you need it, you provide two reference images: what the video should look like at the start and what it should look like at the end. The model then generates the motion and transition between these two anchors, giving you predictable, controllable narrative progression.

This solves one of the most persistent frustrations with AI video: unpredictable scene evolution. In Wan 2.1, this capability existed as a separate model checkpoint (Wan2.1-FLF2V-14B), requiring creators to switch between different versions. In Wan 2.7, first-and-last-frame control is integrated directly into the main model, eliminating workflow friction and enabling seamless multi-shot production. If your workflow starts from approved stills rather than pure prompts, this also makes Wan 2.7 a more natural fit for image-to-video pipelines.

The practical applications are immediate. For filmmakers storyboarding a sequence, you can define the exact composition at scene entry and exit points. For marketers creating product demos, you control the exact product positioning at key moments. For educators producing tutorial content, you ensure visual continuity between instructional segments. This level of control moves AI video generation from experimental tool to production-ready asset.

9-Grid Image-to-Video: Structured Visual Storytelling

Wan 2.7 introduces a revolutionary 9-grid (3x3) image input system for video generation. Instead of feeding a single reference image, creators provide a board of nine images that define scene composition, character angles, lighting conditions, and visual context across different moments or perspectives. The model then synthesizes these inputs into a cohesive animated sequence that maintains structural consistency throughout.

This feature bridges the gap between static storyboard planning and animated output in a way no previous Wan model could achieve. Compared to traditional autoregressive AI video methods, the 9-grid approach delivers superior consistency, faster inference speed, and better structural coherence. For production teams working from detailed storyboards, this transforms planning assets directly into motion without the usual trial-and-error iteration cycle.

The 9-grid system particularly excels in scenarios requiring complex camera movements or character interactions. By providing multiple reference angles, creators can guide the model's understanding of spatial relationships, depth, and movement trajectories, resulting in outputs that feel intentionally choreographed rather than randomly generated.
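To make the 3x3 board concrete, here is a minimal sketch of how the nine cells of a storyboard could be laid out. It assumes the board is prepared as a single composite image, which the text above does not confirm (the nine references may equally be supplied as separate files). The `grid_boxes` helper and the 1920x1080 board size are illustrative, not part of any Wan 2.7 tooling.

```python
from typing import List, Tuple

# (left, top, right, bottom) in pixels
Box = Tuple[int, int, int, int]


def grid_boxes(width: int, height: int, rows: int = 3, cols: int = 3) -> List[Box]:
    """Return the cell boxes of a rows x cols board in reading order."""
    if width < cols or height < rows:
        raise ValueError("board is too small for the requested grid")
    boxes = []
    for r in range(rows):
        for c in range(cols):
            # Integer division keeps neighbouring cells flush with no gaps.
            left = c * width // cols
            top = r * height // rows
            right = (c + 1) * width // cols
            bottom = (r + 1) * height // rows
            boxes.append((left, top, right, bottom))
    return boxes


# Hypothetical 1080P storyboard board split into nine panels.
boxes = grid_boxes(1920, 1080)
```

Keeping every panel the same size and in a fixed reading order is one simple way to avoid the inconsistent grid inputs discussed later under limitations.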

Subject and Voice Reference: Identity Consistency Across Shots

Maintaining character consistency has been notoriously difficult in AI video generation, where identities easily collapse or drift between frames. Wan 2.7 introduces what the documentation calls "Absolute Identity Lock", a subject referencing system designed to hold facial features (eye level, chin lines), clothing details, and environmental styles steady across complex camera movements.

You provide a reference image of a person, object, or character, and the model maintains that visual identity throughout generated videos. This addresses the top complaint about Wan 2.5 and 2.6: characters who did not look the same from frame to frame. The improvement is immediately noticeable in testing, with significantly fewer mid-clip wardrobe changes, facial morphing, or identity drift.

Voice reference extends this consistency to audio. Creators can provide a voice sample, and Wan 2.7 will maintain that vocal identity across generated audio tracks, which is critical for branded content, character-driven narratives, or any project requiring recognizable voice consistency. This puts Wan 2.7 in direct competition with tools like Kling and Hailuo, which have offered identity-consistent generation for some time, though Wan 2.7 pairs it with a broader set of integrated features.

Native Audio Synchronization: Sound That Matches Motion

Unlike most AI video models that generate silent clips requiring manual audio layering, Wan 2.7 includes native audio generation synchronized directly to visual content. Background music, ambient sound, and character vocals are generated in sync with scene motion from the start, not added afterward. For anyone who has manually matched audio to AI video frame by frame, this represents the most immediately practical upgrade in the entire feature set.

The model supports optional audio input, allowing creators to provide reference audio that influences both the visual generation and the synchronized output. This multimodal approach means you can feed in a music track and have the video's pacing, cuts, and motion dynamics respond to the audio's rhythm and emotional tone, creating a level of audio-visual coherence that was previously only achievable through extensive post-production work.

Instruction-Based Video Editing: Text-Driven Modifications

One of Wan 2.7's most innovative features is instruction-based video editing, the ability to modify existing videos through simple text commands without regenerating from scratch. Instead of starting over when you need adjustments, you can type commands like "replace the background," "shift the lighting to golden hour," or "recolor the outfit to blue" and have the model apply those edits while preserving all other elements.

This video-to-video editing capability reduces input data complexity and computational load, delivering faster results than full regeneration. For iterative creative workflows, this is transformative. You can refine outputs through targeted adjustments rather than rolling the dice on entirely new generations. The feature is particularly valuable for agencies applying seasonal treatments to existing footage or brand teams maintaining product visual consistency across multiple scenes without reshooting.

Extended Duration and Continue Filming: Breaking the Fragment Limit

One of the biggest constraints of first-generation video models was narrative length, typically limited to single-shot segments of 3 to 4 seconds. Wan 2.7 addresses this through its "continue filming" feature, an extension system that lets creators keep extending existing video material. The model supports output durations from 2 to 15 seconds in a single generation, making it the only model in this comparison that goes beyond 12 seconds per clip.

This extended duration capability, combined with first-and-last-frame control, enables true multi-shot narrative construction. Creators can chain sequences together with predictable transitions, building longer-form content that maintains visual and stylistic consistency throughout, a critical requirement for professional content workflows.
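The chaining idea can be sketched as a small planning step: each shot's last-frame anchor is reused as the next shot's first-frame anchor, so transitions line up. This is only an illustration under assumptions. The `Shot` structure, the `plan_sequence` helper, and the file names are hypothetical; only the 2 to 15 second range comes from the text.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Shot:
    prompt: str
    first_frame: str  # path to the opening anchor image
    last_frame: str   # path to the closing anchor image
    duration_s: int   # seconds, within the 2-15 range cited above


def plan_sequence(prompts: List[str], keyframes: List[str], duration_s: int = 5) -> List[Shot]:
    """Pair N prompts with N+1 keyframes so adjacent shots share an anchor."""
    if len(keyframes) != len(prompts) + 1:
        raise ValueError("need exactly one more keyframe than prompts")
    if not 2 <= duration_s <= 15:
        raise ValueError("duration must be within 2-15 seconds")
    return [
        Shot(prompts[i], keyframes[i], keyframes[i + 1], duration_s)
        for i in range(len(prompts))
    ]


# Hypothetical two-shot product sequence built from three approved stills.
shots = plan_sequence(
    ["slow push-in on the product", "orbit to reveal the label"],
    ["kf_open.png", "kf_mid.png", "kf_close.png"],
)
total_seconds = sum(s.duration_s for s in shots)
```

Planning the anchors up front means each generation can run independently while the assembled sequence still cuts cleanly from one shot to the next.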

Technical Specifications and Output Quality

Wan 2.7 generates video at native 1080P HD resolution, with 720P also available for faster processing. The model outputs at 24fps, matching cinema-standard frame rates for professional-quality motion. Generation times vary based on duration and resolution, but the model's architecture prioritizes quality over speed, a deliberate trade-off that positions it as a production tool rather than a rapid prototyping system.

The model's visual quality represents a significant upgrade over Wan 2.6 across multiple dimensions. Style consistency is notably stronger. The model holds cinematic realism, anime aesthetics, and illustrated styles more reliably across frames, which is essential for content requiring recognizable visual identity throughout. Temporal consistency has improved dramatically, with fewer flickering faces, fewer mid-clip wardrobe changes, and fewer instances where subjects morph slightly between cuts.

Motion dynamics show particular refinement. Wing motion in a flying eagle test showed clean depth-of-field rendering and natural movement without the jittery artifacts common in earlier models. Landscape pans demonstrate smooth cloud movement and atmospheric depth, with the model handling negative prompts effectively to suppress artifacts like blur, distortion, and watermarks.

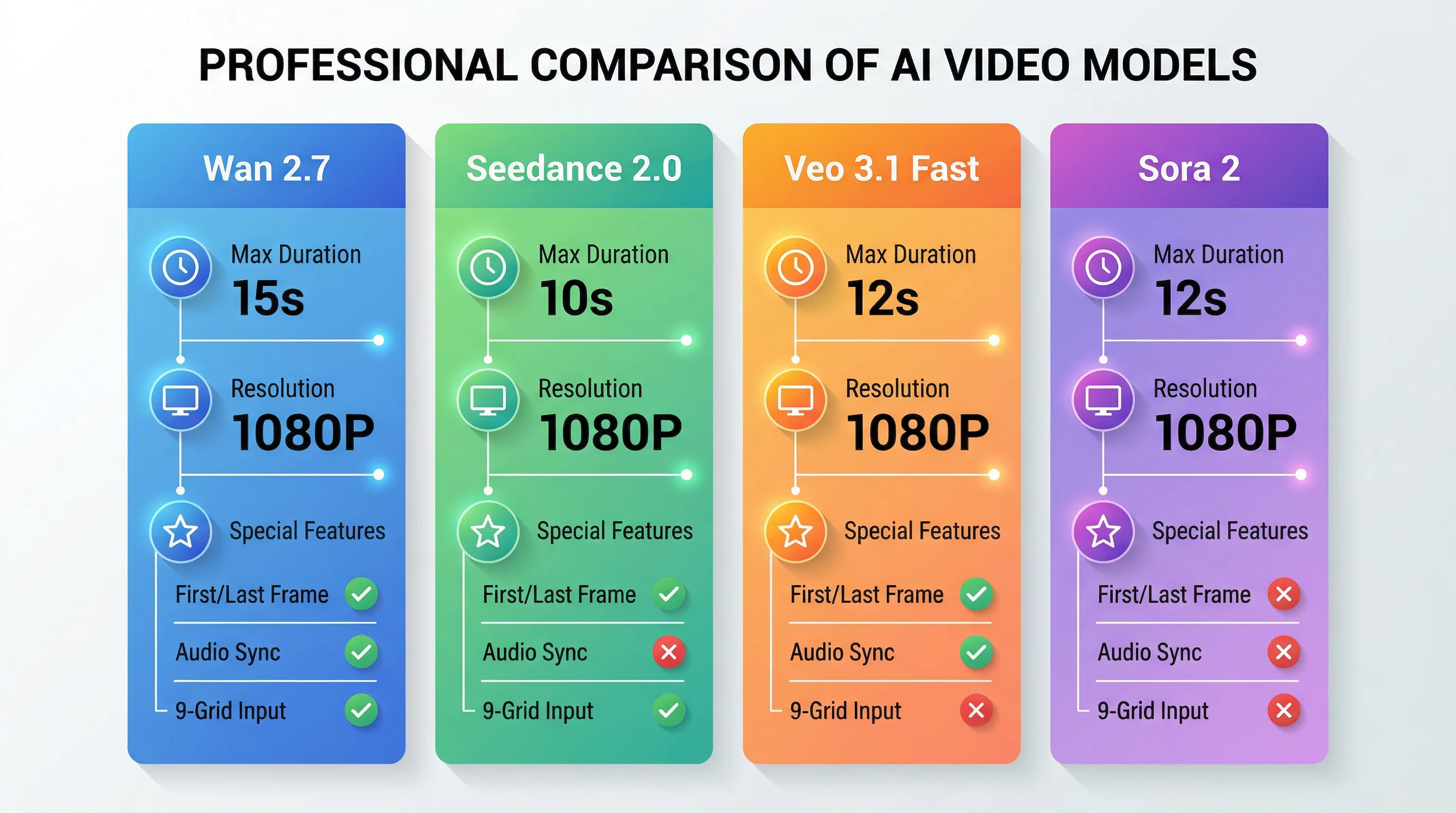

How Wan 2.7 Compares to Competing Models

Wan 2.7 vs Seedance 2.0: Features vs Value

ByteDance's Seedance 2.0 delivers the best value for high-quality motion at the lowest cost per second in the current market. However, Wan 2.7 is the Swiss Army knife of the comparison, offering the most features, most flexibility, and the best fit for workflows requiring audio input synchronization or frame-to-frame control.

Seedance 2.0 maxes out at 10-second clips and lacks first-and-last-frame control or audio input features. It interprets prompts with slightly more creative license, which can be either beneficial or frustrating depending on project requirements. Seedance is generally faster for API-based generation, making it ideal for rapid iteration and testing. The smart approach many production teams adopt is using Seedance 2.0 for iteration and testing, then generating final output with Wan 2.7 when unique creative controls are needed. If you want the deeper production tradeoffs, read the dedicated Seedance 2 review and the older Seedance 2 vs Wan 2.6 comparison.

Wan 2.7 vs Veo 3.1: Control vs Physics

Google's Veo 3.1 Fast leads on physics realism and is the only model in the comparison offering both auto-generated audio and 12-second clips at $0.10 per second. Veo 3.1 produces cinema-quality output at 24fps with native audio generation including ambient sounds, dialogue, and music all synchronized to visuals. However, Veo 3.1 Lite maxes out at 8 seconds and lacks the granular control features that define Wan 2.7.

Where Wan 2.7 excels is in giving creators precise control over scene construction through first-and-last-frame anchors, 9-grid composition, and instruction-based editing. Veo 3.1 prioritizes realistic physics simulation and natural motion, making it ideal for scenarios where realism trumps control. The choice between them depends on whether your workflow values directability (Wan 2.7) or naturalistic physics (Veo 3.1). For the full breakdown, the standalone Veo 3.1 review covers the physics and audio side in more detail.

Wan 2.7 vs Sora 2: Production Tool vs Experimental Platform

OpenAI's Sora 2 competes with Veo 3.1 on physics realism and offers 12-second clips with auto-generated audio. However, Sora remains more experimental in positioning, with less emphasis on production workflow integration. Wan 2.7's feature set, particularly first-and-last-frame control, 9-grid input, and instruction-based editing, is explicitly designed to match how professional content workflows actually operate: you have a storyboard, you have a character, and you have defined start and end points for each scene. Wan 2.7 works with that structure rather than against it. If you are comparing frontier models across the whole category, the broader AI video model comparison is a useful companion.

Model Comparison Table

| Feature | Wan 2.7 | Seedance 2.0 | Veo 3.1 Fast | Sora 2 |
|---|---|---|---|---|
| Max Duration | 15 seconds | 10 seconds | 12 seconds | 12 seconds |
| Max Resolution | 1080P | 1080P | 1080P | 1080P |
| First/Last Frame Control | ✓ | ✗ | ✗ | ✗ |
| Audio Input Sync | ✓ | ✗ | ✗ | ✗ |
| Native Audio Generation | ✓ | ✗ | ✓ | ✓ |
| 9-Grid Image Input | ✓ | ✗ | ✗ | ✗ |
| Instruction-Based Editing | ✓ | ✗ | ✗ | ✗ |
| Subject Reference | ✓ | ✓ | ✓ | ✓ |
| Negative Prompts | ✓ | Limited | ✗ | ✗ |
| Pricing Model | Credits (no expiry) | Per-generation | Per-generation | Subscription |
| Commercial Use | All tiers | All tiers | All tiers | Varies |
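
For teams that route jobs between models, the table can be turned into a simple lookup. The sketch below transcribes a subset of the rows above into a dictionary and filters by requirement. The `shortlist` helper and the feature key names are illustrative choices, and the data is only as current as this comparison.

```python
from typing import Dict, Iterable, List

# Subset of the comparison table above, transcribed by hand.
MODELS: Dict[str, Dict[str, object]] = {
    "Wan 2.7": {"max_seconds": 15, "first_last_frame": True, "audio_input": True,
                "native_audio": True, "nine_grid": True, "instruction_edit": True},
    "Seedance 2.0": {"max_seconds": 10, "first_last_frame": False, "audio_input": False,
                     "native_audio": False, "nine_grid": False, "instruction_edit": False},
    "Veo 3.1 Fast": {"max_seconds": 12, "first_last_frame": False, "audio_input": False,
                     "native_audio": True, "nine_grid": False, "instruction_edit": False},
    "Sora 2": {"max_seconds": 12, "first_last_frame": False, "audio_input": False,
               "native_audio": True, "nine_grid": False, "instruction_edit": False},
}


def shortlist(min_seconds: int = 0, required: Iterable[str] = ()) -> List[str]:
    """Return models that meet the duration floor and have every required feature."""
    required = list(required)
    return [
        name
        for name, features in MODELS.items()
        if features["max_seconds"] >= min_seconds
        and all(features[key] for key in required)
    ]


needs_audio_12s = shortlist(min_seconds=12, required=["native_audio"])
needs_anchors = shortlist(required=["first_last_frame"])
```

A lookup like this makes the "iterate on a fast model, finish on Wan 2.7" approach explicit: the job's requirements decide the model, not habit.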

Real-World Use Cases and Workflow Integration

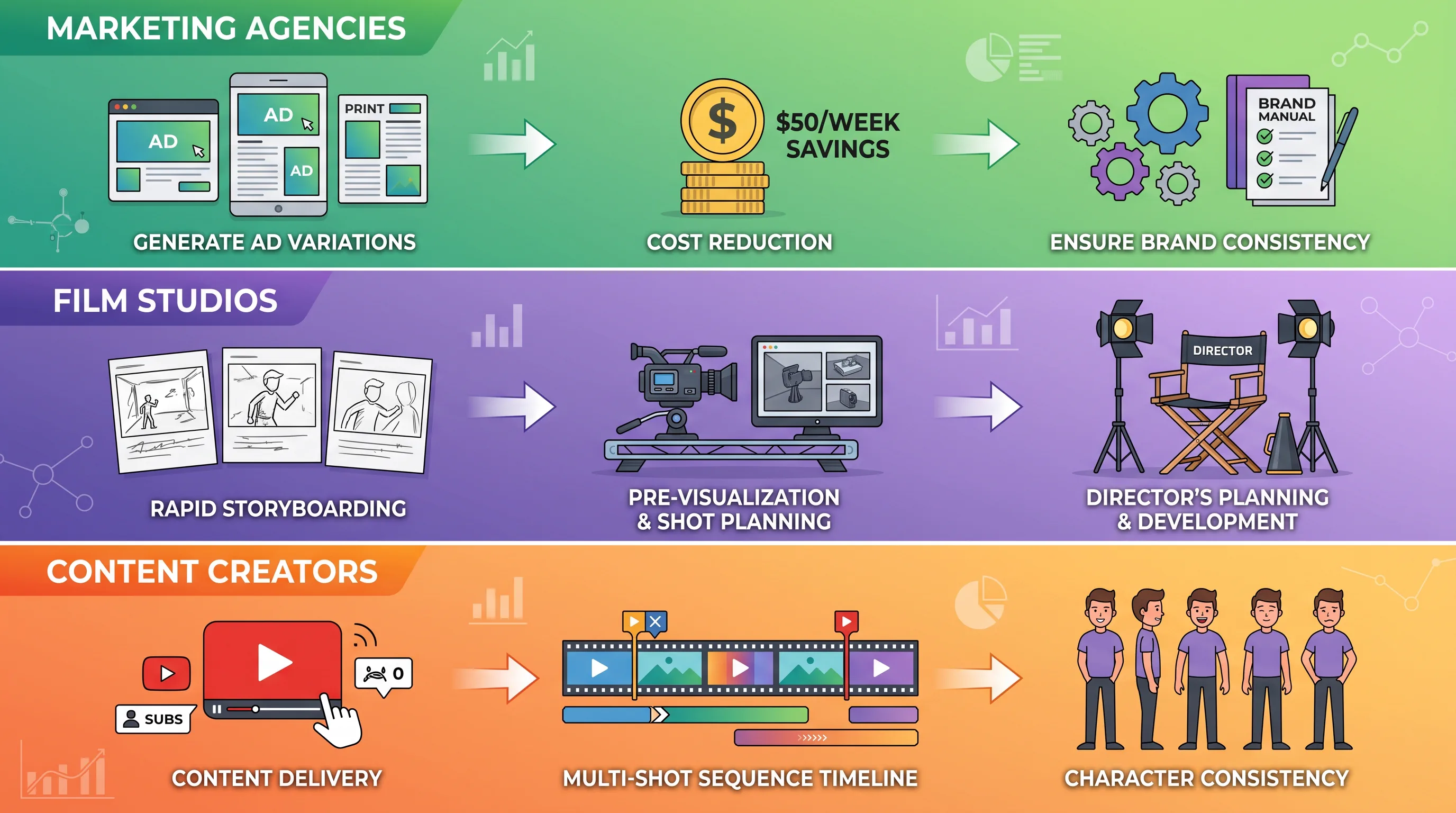

Marketing Agencies: Programmatic Ad Variant Generation

An agency producing 50 ad variants per week can automate the raw generation step at under $50 in credits, leaving human time for strategy and final review. This represents a meaningful reallocation of budget from manual production to creative strategy. The no-subscription model means there is no monthly seat fee, no platform lock-in, and no usage minimum. You pay per generation, which aligns perfectly with performance marketing workflows. Teams building repeatable ad systems will usually pair that with a clear Seedance 2 pricing reference or a benchmark article for faster iteration models.
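The arithmetic behind that claim is simple enough to check. The sketch below uses only the two figures quoted above (50 variants, a $50 ceiling) and treats the weekly figure as an upper bound. Actual credit prices vary by provider, so the function name and the 52-week projection are illustrative.

```python
def max_cost_per_variant(weekly_budget_usd: float, variants_per_week: int) -> float:
    """Upper bound on spend per variant for a given weekly credit budget."""
    if variants_per_week <= 0:
        raise ValueError("variants_per_week must be positive")
    return weekly_budget_usd / variants_per_week


# Figures from the text: 50 variants for under $50 in credits.
per_variant_ceiling = max_cost_per_variant(50.0, 50)

# Projected yearly ceiling if the same volume holds for 52 weeks.
yearly_ceiling = 50.0 * 52
```

In other words, the claim amounts to under a dollar of raw generation per ad variant, before any human review time is counted.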

Brand teams can use subject referencing to keep product visuals consistent across multiple generated scenes. The video-to-video editing feature allows them to take existing footage and apply new styles or seasonal treatments without reshooting, critical for maintaining brand consistency while adapting to campaign cycles.

Film Studios: Pre-Visualization and Rapid Prototyping

AI pre-visualization is drawing growing interest in film production, and Wan 2.7's feature set directly addresses this workflow. Directors can use 9-grid storyboards to visualize complex sequences before committing to expensive shoots. First-and-last-frame control enables precise scene planning, while subject reference maintains character consistency across pre-viz sequences.

The model's ability to generate up to 15-second clips with native audio makes it viable for full scene pre-visualization rather than just single-shot tests. Production teams can iterate on blocking, camera movement, and pacing with actual motion and sound before stepping onto set, reducing costly on-set experimentation.

Content Creators: Multi-Shot Narrative Construction

For YouTubers, educators, and narrative content creators, Wan 2.7's continue filming feature and extended duration support enable true multi-shot storytelling. The combination of first-and-last-frame control with subject reference means you can maintain character consistency across an entire video sequence, solving the problem that has plagued AI video since its inception.

The instruction-based editing capability is particularly valuable for iterative content refinement. Instead of regenerating entire clips when you need adjustments, you can apply targeted modifications through text commands, dramatically reducing iteration time and computational costs.

Technical Integration: API Access and Platform Support

Wan 2.7 is available through multiple API providers, including Together AI, Segmind, and direct access through Alibaba's Model Studio. The Together AI implementation uses the endpoint Wan-AI/Wan2.7-T2V and supports standard REST API calls with JSON payloads. Authentication uses API key headers, and the model runs on Together AI's serverless infrastructure for scalable deployment.
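As a rough illustration of what such a call involves, the sketch below assembles headers and a JSON body without sending anything. Only the model identifier `Wan-AI/Wan2.7-T2V` comes from the text. The `Bearer` authorization scheme and the payload field names (`prompt`, `duration`, `resolution`) are assumptions made for illustration, and no endpoint URL is shown because none is given here. Check the provider's API reference for the real schema.

```python
import json
from typing import Dict, Tuple

MODEL_ID = "Wan-AI/Wan2.7-T2V"  # model identifier quoted in the text


def build_request(
    api_key: str,
    prompt: str,
    duration_s: int = 5,
    resolution: str = "1080P",
) -> Tuple[Dict[str, str], str]:
    """Assemble headers and a JSON body for a text-to-video request.

    Field names and the auth scheme are hypothetical placeholders.
    """
    headers = {
        "Authorization": f"Bearer {api_key}",  # assumed API-key header format
        "Content-Type": "application/json",
    }
    payload = {
        "model": MODEL_ID,
        "prompt": prompt,
        "duration": duration_s,    # hypothetical field name
        "resolution": resolution,  # hypothetical field name
    }
    return headers, json.dumps(payload)


headers, body = build_request("sk-test", "A lighthouse at dusk, slow aerial orbit")
```

Separating request construction from the network call keeps the payload easy to log and unit test before any credits are spent.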

Platform integrations are expanding rapidly. Wan 2.7 is live on Picsart, SeaArt AI, EaseMate AI, WaveSpeedAI, and MindStudio, among others. SeaArt AI's model library allows creators to access multiple AI video models without switching platforms, enabling direct side-by-side comparisons between Wan 2.7, Seedance, Veo, and other models within a single workflow.

MindStudio's integration is particularly notable for workflow automation. The platform supports building AI agents that can watch for new product images in a Google Drive folder, generate videos using Wan 2.7, apply post-processing (upscaling, face swap, clip merging, subtitle generation), and deliver finished clips to Slack, all without manual intervention. This level of workflow integration transforms Wan 2.7 from a generation tool into a production pipeline component.

Pricing and Commercial Licensing

Wan 2.7 operates on a credits-based pricing model with no monthly subscription fees. Credits do not expire, a significant advantage over most AI video tools that reset unused capacity at the end of each billing cycle. Commercial use is included on all paid tiers, with relatively permissive licensing terms consistent with the Wan series' historical approach.

Specific pricing varies by platform provider, but the general structure favors production workflows with variable usage patterns. For technical buyers who evaluate tools by their official documentation, callable APIs, and published price tables, Alibaba's publicly documented video offerings are more clearly specified than those of many competitors.

Parameter Configuration and Prompt Engineering

| Parameter | Range | Recommended | Purpose |
|---|---|---|---|
| Duration | 2-15 seconds | 5-8 seconds | Balance quality and generation time |
| Resolution | 720P, 1080P | 1080P for final, 720P for testing | Output quality vs speed |
| Aspect Ratio | 16:9, 4:3, 9:16 | 16:9 for web, 9:16 for mobile | Platform optimization |
| Negative Prompt | Text string | blurry, distorted, watermark | Artifact suppression |
| Prompt Expansion | Boolean | Enabled | Enhanced detail interpretation |
| Audio Input | Optional file | Use for rhythm sync | Audio-visual coherence |
| Frame Anchors | 0-2 images | Use for scene control | Predictable transitions |
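
The ranges in this table are easy to check before a job is submitted. The following is a minimal sketch that validates a configuration against the values listed above. The `validate_params` function and its argument names are hypothetical and do not correspond to any provider's SDK.

```python
from typing import List

ALLOWED_RESOLUTIONS = {"720P", "1080P"}
ALLOWED_ASPECT_RATIOS = {"16:9", "4:3", "9:16"}


def validate_params(
    duration_s: int,
    resolution: str,
    aspect_ratio: str,
    frame_anchors: int = 0,
) -> List[str]:
    """Return a list of problems; an empty list means the config is in range."""
    errors = []
    if not 2 <= duration_s <= 15:
        errors.append("duration must be 2-15 seconds")
    if resolution not in ALLOWED_RESOLUTIONS:
        errors.append("resolution must be 720P or 1080P")
    if aspect_ratio not in ALLOWED_ASPECT_RATIOS:
        errors.append("aspect ratio must be 16:9, 4:3, or 9:16")
    if not 0 <= frame_anchors <= 2:
        errors.append("frame anchors must be 0-2 images")
    return errors


ok = validate_params(5, "1080P", "16:9", frame_anchors=2)
bad = validate_params(20, "4K", "1:1", frame_anchors=3)
```

Collecting every problem in one pass, rather than failing on the first, is a deliberate choice here so a batch of ad variants can be corrected in a single round.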

Wan 2.7 responds well to detailed descriptive prompts with specifics about setting, lighting, camera movement, and action. The model benefits from structured prompt engineering that separates scene description, character details, motion direction, and stylistic preferences into distinct clauses. Negative prompts reliably suppress common artifacts, though they will not rescue fundamentally poor input images. If you want a stronger prompt structure for production work, the Seedance 2 prompt guide is still worth borrowing from because the camera-language and scene-blocking principles transfer well.
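One way to apply that clause structure consistently is a small prompt builder. The sketch below joins the four clause types named above into a single prompt string. The `build_prompt` helper and the sample text are illustrative, and the default negative prompt simply reuses the recommended value from the parameter table.

```python
DEFAULT_NEGATIVE = "blurry, distorted, watermark"  # recommended value from the table


def build_prompt(scene: str, character: str, motion: str, style: str) -> str:
    """Join non-empty clauses into one prompt, one sentence per clause."""
    parts = [
        clause.strip().rstrip(".")
        for clause in (scene, character, motion, style)
        if clause.strip()
    ]
    if not parts:
        raise ValueError("at least one clause is required")
    return ". ".join(parts) + "."


prompt = build_prompt(
    scene="Rainy street at night, neon reflections",
    character="A courier in a yellow jacket",
    motion="Slow tracking shot from behind",
    style="Cinematic realism",
)
```

Keeping the clauses as separate arguments makes it easy to vary one dimension, such as camera motion, while holding the rest of the prompt fixed across iterations.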

Limitations and Considerations

Despite its comprehensive feature set, Wan 2.7 has limitations worth noting. The model prioritizes quality over speed, resulting in longer generation times compared to competitors like Seedance 2.0 or Veo 3.1 Lite. For rapid prototyping workflows requiring dozens of quick iterations, this can be a bottleneck. The recommended approach is using faster models for initial testing and reserving Wan 2.7 for final production generations where its unique features add value.

While temporal consistency has improved dramatically over Wan 2.6, it is not perfect. Complex scenes with multiple moving elements or rapid camera movements can still exhibit minor flickering or consistency issues, though these are significantly reduced compared to earlier versions. The model performs best with clear, well-structured prompts and high-quality input images. Garbage in, garbage out still applies.

The 9-grid feature, while innovative, requires careful preparation of input images to achieve optimal results. Poorly composed or inconsistent grid inputs can confuse the model's spatial understanding, leading to suboptimal outputs. This feature works best when integrated into structured storyboarding workflows rather than ad-hoc experimentation.

Why Seedance AI Offers the Best Access to Wan 2.7

For creators and production teams looking to leverage Wan 2.7's capabilities, Seedance AI provides the most comprehensive access point. The platform integrates multiple cutting-edge video and image generation models in a single interface, eliminating the need to maintain separate accounts and workflows across different providers. This unified approach dramatically reduces friction in multi-model workflows, a critical advantage when you need to compare outputs or use different models for different stages of production. If you want the direct product overview first, you can also jump straight to the Wan 2.7 Video model page.

Seedance AI offers an exceptionally convenient, one-stop AI creation experience. The platform supports not only Wan 2.7 but also other frontier models like Seedance's own video generators, Kling, Veo, and leading image generation systems. This breadth means you can test Wan 2.7's first-and-last-frame control against Seedance 2.0's speed, or compare subject reference consistency across multiple models, all within the same project workspace. For agencies and studios managing diverse content requirements, this flexibility is invaluable.

The platform's interface is designed for production workflows, with features like batch processing, project organization, and integrated post-processing tools. You can generate with Wan 2.7, apply upscaling or editing, and export final assets without leaving the platform. For teams evaluating whether Wan 2.7's unique features justify integration into their pipeline, Seedance AI provides the lowest-friction testing environment available.

Conclusion: Production-Ready AI Video Has Arrived

Wan 2.7 delivers on what the text-to-video AI category has been promising for two years: production-adjacent quality through accessible APIs at pricing that makes programmatic video generation actually viable for commercial workflows. The model's feature set (first-and-last-frame control, 9-grid composition, subject and voice reference, native audio synchronization, and instruction-based editing) represents a fundamental shift from experimental generation tools to production pipeline components.

For filmmakers, YouTubers, marketers, and agency creators who need multi-shot videos with audio and precise control, Wan 2.7's feature set matches how professional content workflows actually operate: from a storyboard, a character, and defined start and end points for each scene. The model is not perfect, and it is not the fastest option available, but among the models compared here it is the most feature-rich and controllable in the 2026 landscape.

The real question is not whether Wan 2.7 is overhyped. It is whether your specific workflow benefits from its unique control features. If you are producing content that requires character consistency across shots, precise scene transitions, audio-visual synchronization, or iterative editing without full regeneration, Wan 2.7 is the strongest option currently available. For rapid prototyping or scenarios where speed trumps control, alternatives like Seedance 2.0 or Veo 3.1 Lite may be more appropriate. The smart approach is adding Wan 2.7 to your toolkit for scenarios where its capabilities provide clear value, while maintaining access to faster models for iteration.

AI video generation has moved from experimental novelty to production tool. Wan 2.7 represents the current state of the art in controllable, feature-rich video generation, and platforms like Seedance AI make accessing and integrating these capabilities into real workflows more practical than ever before.