AI video is moving fast, and Veo 3.1 already hints at where Google may go next. Its gains in image-to-video quality, native audio, and camera control have made a possible Veo 4 one of the most closely watched upcoming model releases.

Google has not officially announced Veo 4 at the time of writing, but the broader direction is already visible. Based on current Veo capabilities, competitive movement across the market, and the real-world pain points creators still face, this guide explores what Veo 4 might deliver and why it matters for creators, marketers, and developers building the next generation of video content.

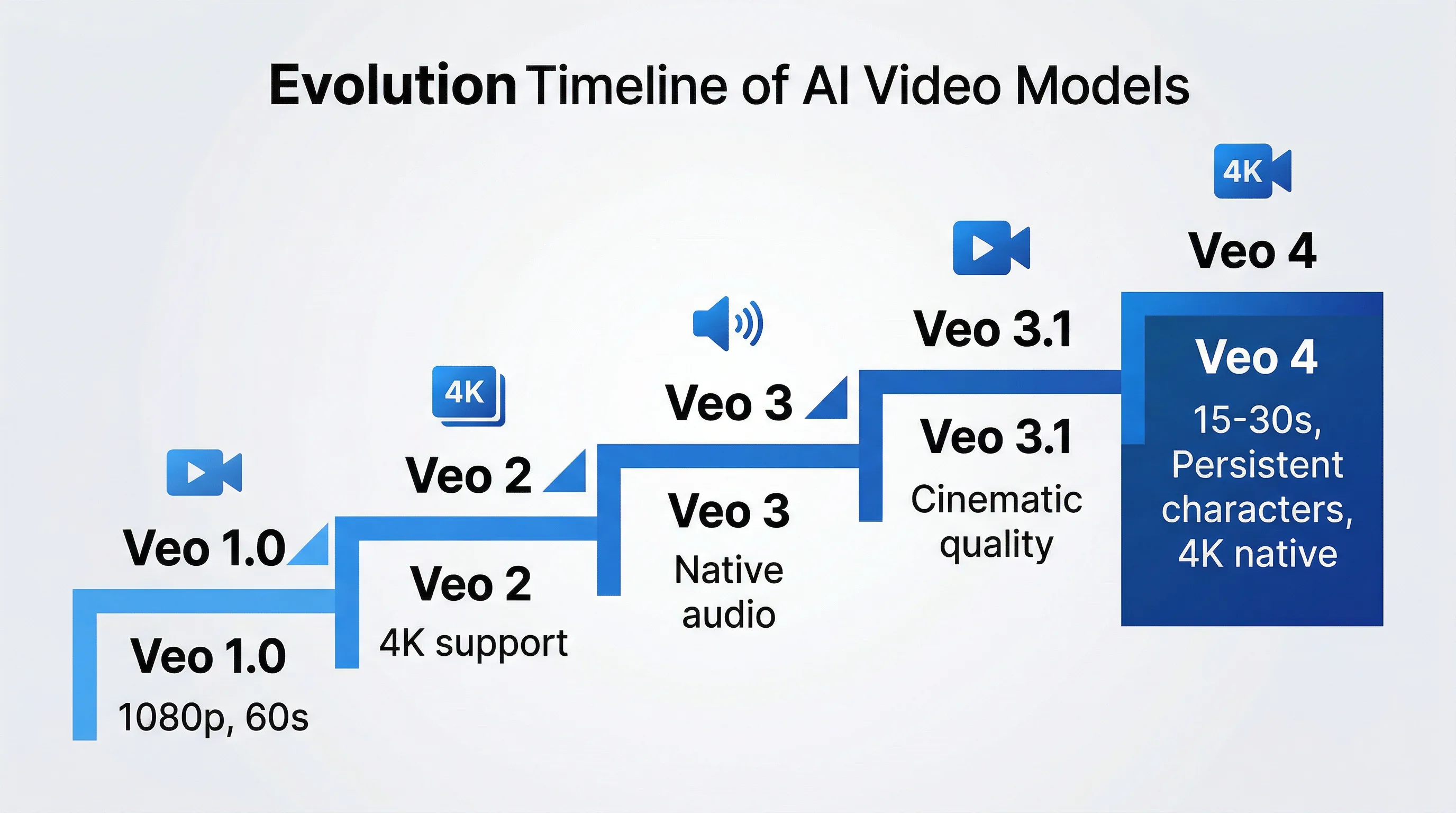

Understanding the Veo Lineage: From Veo 1.0 to Veo 3.1

To understand what Veo 4 could represent, it helps to look at the pattern Google has already established. Veo 1.0, announced at Google I/O 2024, marked Google's first serious push into text-to-video generation, with a focus on cinematic motion and longer-form output than most early rivals could manage.

The iteration speed accelerated from there. Veo 2, released in late 2024, pushed toward higher fidelity and stronger real-world physics. Veo 3 added native audio generation, bringing synchronized dialogue, sound effects, and ambient sound into the same generation workflow. Veo 3.1 then tightened image-to-video quality, improved temporal stability, and pushed the model closer to production-ready output.

Veo 3.1, the current flagship, delivers consistent 1080p output, supports native 4K workflows, and produces camera motion that feels more cinematic than the average AI video generator. It uses a Diffusion Transformer approach across spatio-temporal patches, meaning video is modeled as a continuous sequence rather than a stack of disconnected still images. That architectural choice is a large part of why its motion fidelity and physical consistency feel stronger than in many competing systems.

Real-world testing supports that view. Veo 3.1 routinely produces some of the cleanest single-shot outputs in the category, with fewer compression artifacts, stronger prompt adherence around camera movement, and more stable motion across its full generation window. You can already experiment with that workflow through Seedance AI's Veo 3.1 experience, which gives creators a practical way to evaluate how Google's current model behaves before a future release arrives.

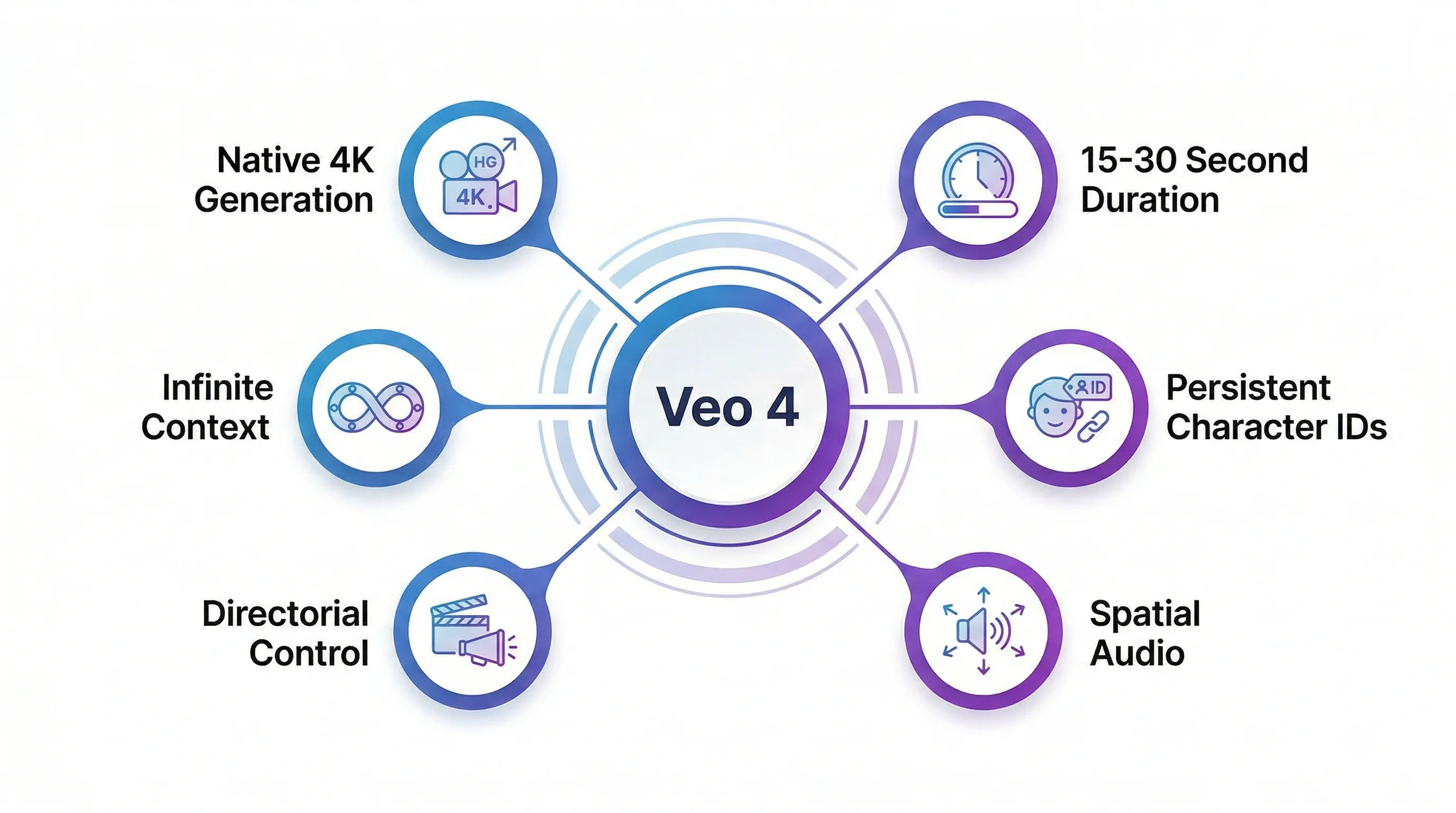

What Veo 4 Is Expected to Deliver

Based on current model limitations, competitive pressure, and Google's own product trajectory, Veo 4 is likely to focus on the remaining blockers that still keep AI video from feeling fully native to professional production.

Extended Duration with Temporal Consistency

Veo 3.1 still tops out at clips of roughly 8 seconds. That makes it useful for cinematic inserts, ads, social content, and fast experimentation, but it forces narrative creators into editing-heavy workflows when they need longer scenes. Veo 4 is expected to push single-pass generation toward the 15 to 30 second range while preserving continuity across the entire sequence.

Temporal consistency remains one of the hardest problems in AI video. Earlier models frequently forgot props mid-shot, drifted in costume details, or shifted lighting in ways that broke immersion. A next-generation Veo model will likely aim to preserve scene memory far more reliably, making it possible to hold object identity, environmental logic, and character appearance over longer durations.

Native 4K Generation and Micro-Detail Fidelity

While Veo 3.1 already competes well in high-resolution workflows, much of the market still depends on upscaling. True native 4K matters because it determines whether footage survives close inspection on large displays, premium ad placements, or cinematic delivery pipelines.

If Veo 4 pushes deeper into native 4K generation, the real gain will not just be pixel count. It will be micro-detail fidelity: skin texture, water droplets, reflections, environmental particles, and subtle lighting effects that look generated with intent rather than interpolated from a softer source.

Persistent Character Identity and Avatar Systems

Character consistency remains one of the biggest workflow bottlenecks in AI video. Most current models can keep a subject stable inside one short clip, but they struggle when the same character has to appear across multiple scenes with the same face, hair, voice, and body language.

Veo 4 could address this with some form of persistent character memory, identity tokens, or avatar slots. If creators can define a reusable on-screen character once and deploy that identity across multiple prompts and scenes, AI video moves much closer to serialized storytelling, branded spokespeople, and reusable campaign assets.

Advanced Camera Control and Directorial Precision

Veo 3.1 already responds well to prompts like "tracking shot," "dolly in," or "golden hour backlight." Veo 4 is expected to make that control more granular, potentially moving from prompt-driven camera guidance toward shot-level directing.

That could mean more reliable focal changes, stronger control over shot progression, cleaner lens-language interpretation, and eventually selective editing where only a segment of a shot gets regenerated instead of the whole clip. For creators used to traditional production tools, that shift would make AI video feel less like prompt gambling and more like directing.

Spatially Aware Audio

Native synchronized audio was one of the biggest Veo 3 breakthroughs. Veo 4 could take that further by improving spatial acoustics so environments sound physically correct, not just contextually matched.

That means dialogue that behaves differently in a hallway versus a warehouse, footsteps that change with floor material, and ambient sound that evolves naturally as the camera moves through space. If Google gets this right, one of the clearest remaining tells of AI-generated content starts to disappear.

How Veo 4 Compares to the Competition

Veo 4 does not exist in a vacuum. Any future Google release will have to compete against the models that already define the top tier of AI video today.

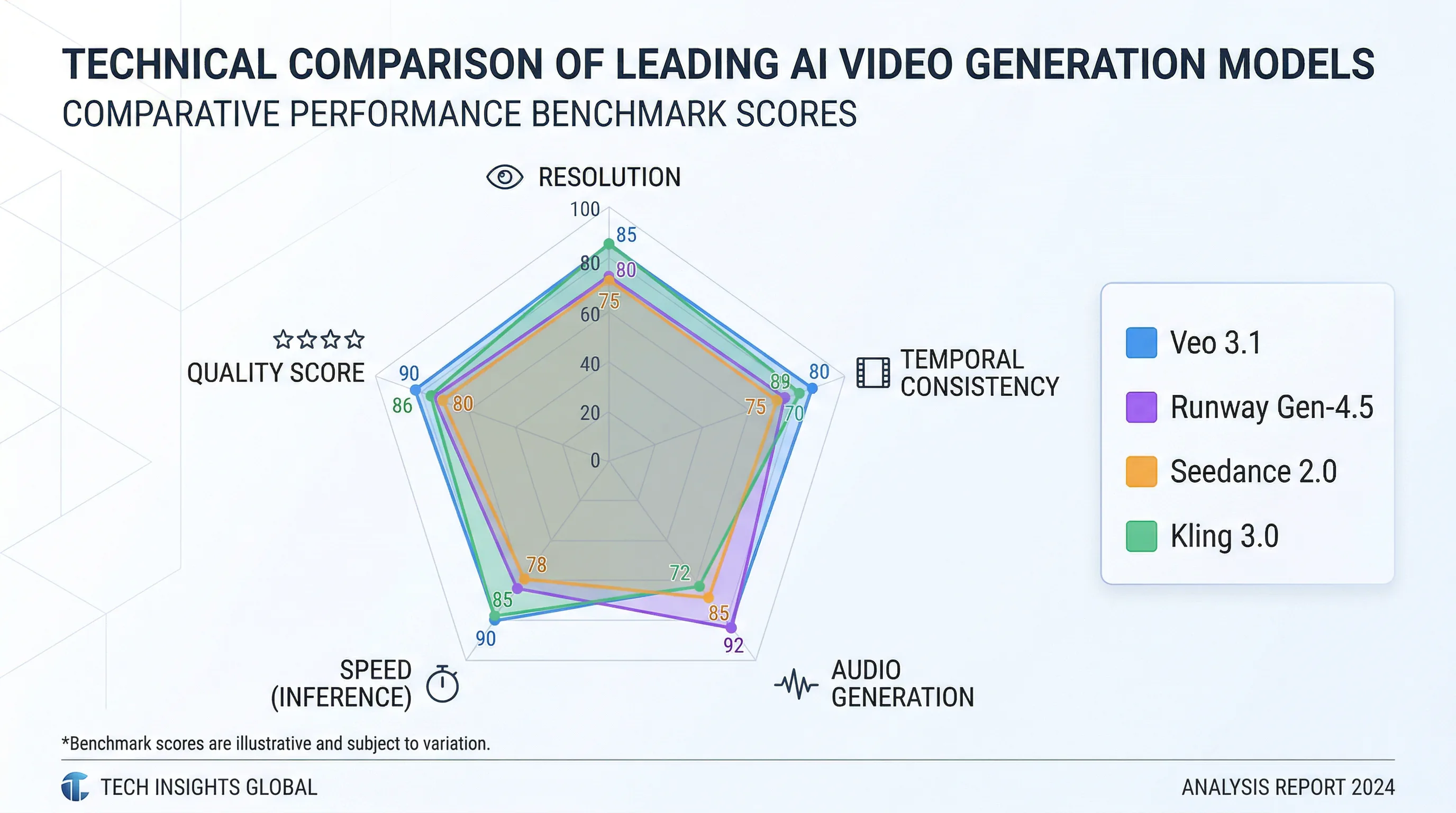

Benchmark Performance and Quality Metrics

Recent benchmark summaries place Runway Gen-4.5 near the top of the quality conversation, with Veo 3.1 close behind and Seedance 2.0 also performing strongly in composite rankings. Those leaderboards typically aggregate visual fidelity, motion smoothness, prompt alignment, and temporal consistency into a single score.
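To make the aggregation concrete, here is a minimal sketch of how a composite score could be computed as a weighted mean. The weights, the 0-100 scale, and the example numbers are all assumptions for illustration; real leaderboards publish their own methodology, and the numbers below are placeholders, not measured results for any model.

```python
# Hypothetical weights chosen for illustration only.
WEIGHTS = {
    "visual_fidelity": 0.3,
    "motion_smoothness": 0.3,
    "prompt_alignment": 0.2,
    "temporal_consistency": 0.2,
}


def composite_score(metrics: dict[str, float],
                    weights: dict[str, float] = WEIGHTS) -> float:
    """Return the weighted mean of per-metric scores (assumed 0-100 scale)."""
    missing = set(weights) - set(metrics)
    if missing:
        raise ValueError(f"missing metrics: {sorted(missing)}")
    total_weight = sum(weights.values())
    weighted_sum = sum(metrics[name] * w for name, w in weights.items())
    return weighted_sum / total_weight


# Placeholder scores for an unnamed model, not benchmark data.
example = {
    "visual_fidelity": 90.0,
    "motion_smoothness": 80.0,
    "prompt_alignment": 70.0,
    "temporal_consistency": 85.0,
}
print(round(composite_score(example), 1))
```

Changing the weights changes the ranking, which is one reason a single composite number can hide the category-level differences that matter for a specific project.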

The raw leaderboard numbers only tell part of the story. In practice, Veo 3.1 stands out in a few specific areas:

- Strong cinematic color and lighting consistency

- Clean single-shot realism

- Native audio generation inside the same workflow

- Better-looking high-resolution output than many models that rely on upscale-heavy pipelines

Seedance 2.0, by contrast, currently leads in motion naturalness for many creators. Characters move with more weight, more believable timing, and more human body mechanics than most rivals. Runway remains especially strong for creative control and image-to-video workflows. Kling keeps improving in high-resolution motion and stylized output.

| Model | Resolution | Duration | Audio | Temporal Consistency | Best Use Case |
|---|---|---|---|---|---|
| Veo 3.1 | Native 4K | 4-8 sec | Native sync | Excellent | Cinematic, professional content |
| Runway Gen-4.5 | 1080p (4K upscale) | Variable | External | Very Good | Image-to-video, creative control |
| Seedance 2.0 | Up to 4K | 5-10 sec | External | Excellent | Motion quality, photorealism |
| Kling 3.0 | Ultra-HD | Variable | External | Good | Character animation, stylized content |

The Ecosystem Advantage

What gives Google a structural edge is not just model quality. It is ecosystem integration. Veo is positioned to live inside YouTube, Gemini, Workspace, Google Ads, and developer-facing APIs. That means Google does not have to win by turning Veo into a standalone consumer destination. It can win by making Veo useful exactly where creators and marketers already work.

Google has already integrated Veo into advertising workflows. Marketers can turn static assets into short video creatives without building an entirely separate production process. For developers, Veo 3.1 Lite is available through the Gemini API and Google AI Studio, which means the infrastructure layer is already in place for broader application-level video generation.

That distribution advantage matters. The AI video companies that survive long term are unlikely to be the ones with the flashiest single demo. They will be the ones with stable infrastructure, practical product embedding, and sustainable delivery economics.

Real-World Testing: What Creators Are Saying

User feedback from production environments already reveals both Veo's strengths and the gaps a future Veo 4 would need to close.

Strengths Confirmed in Practice

Creators consistently praise Veo 3.1 for single-shot realism and frame consistency. In tests involving dynamic subjects, moving cameras, and complex lighting, Veo often produces cleaner shot integrity than competing models. One recurring pattern in creator feedback is that Veo may not always be the most expressive model, but it is often the one that looks the most finished straight out of generation.

The built-in audio workflow also gets strong marks. Even when the sound is not final-mix quality, having synchronized draft audio immediately available speeds up ideation, review cycles, and rough-cut production dramatically. That is especially useful for concept development, ad testing, and narrative prototyping.

Limitations That Veo 4 Must Address

The short generation window remains the biggest complaint. If a story needs breathing room, creators still have to work around the 8-second ceiling. That adds stitching friction, continuity risk, and extra editorial work.
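The stitching cost is easy to estimate. The sketch below assumes a fixed per-clip ceiling (8 seconds by default, matching the limit described above) and counts how many generations and cut points a scene of a given length would need. The function names are illustrative.

```python
import math


def clips_needed(scene_seconds: float, max_clip_seconds: float = 8.0) -> int:
    """Minimum number of generations needed to cover a scene."""
    if scene_seconds <= 0 or max_clip_seconds <= 0:
        raise ValueError("durations must be positive")
    return math.ceil(scene_seconds / max_clip_seconds)


def stitch_points(scene_seconds: float, max_clip_seconds: float = 8.0) -> int:
    """Number of cuts where continuity has to be managed by hand."""
    return clips_needed(scene_seconds, max_clip_seconds) - 1


# A 30-second scene at an 8-second ceiling: 4 clips and 3 stitch points.
print(clips_needed(30), stitch_points(30))
```

If a future model reached a 30-second window, the same scene would need one generation and no stitch points, which is why duration is such a frequent request.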

Character identity across multiple clips also remains imperfect. Veo 3.1 can maintain appearance reasonably well when given good references, but it still does not behave like a true persistent character system. For long-form storytelling, that limitation is still decisive.

How to Prepare for Veo 4

No official Veo 4 release date has been announced, but creators and developers can prepare now by building skills and workflows that will transfer cleanly when the next model arrives.

Master Prompt Engineering for Veo's Current Architecture

The most impressive AI video work is rarely a one-shot miracle. It is usually the result of structured prompting, careful direction, and a clear sense of how the model interprets camera language, lighting, pacing, and scene logic.

Using current Veo 3.1 workflows on Seedance AI is the fastest way to build that intuition. Test how the model handles motion cues, focal changes, lighting adjectives, and reference images. The patterns you learn now will likely transfer directly into any future Veo release.

Think in Scenes, Not Clips

The best AI video creators no longer think in isolated outputs. They think in sequences, coverage, continuity, and editorial flow. Even before Veo 4 arrives, that mental shift matters.

Plan shot lists. Build visual systems. Reuse camera language. Treat each generation as part of a larger scene rather than a standalone social clip. The creators who adopt that mindset early will benefit most when model memory and generation duration improve.
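One way to put scene-level thinking into practice is to keep the shot list as structured data, so that the shared look is written once and reused in every prompt. This is a minimal sketch; the `Shot` and `Scene` classes and their field names are hypothetical and not tied to any Veo or Seedance API.

```python
from dataclasses import dataclass, field


@dataclass
class Shot:
    action: str        # what happens in the shot
    camera: str        # camera language, e.g. "tracking shot"
    seconds: int = 8   # planned clip length


@dataclass
class Scene:
    name: str
    look: str  # shared lighting/style phrase reused in every shot
    shots: list[Shot] = field(default_factory=list)

    def prompts(self) -> list[str]:
        """Build one prompt per shot, appending the shared look each time."""
        return [f"{s.camera}, {s.action}, {self.look}" for s in self.shots]

    def total_seconds(self) -> int:
        """Planned running time of the whole scene."""
        return sum(s.seconds for s in self.shots)


scene = Scene(
    name="cafe_open",
    look="golden hour backlight",
    shots=[
        Shot("a barista unlocks the cafe door", "tracking shot"),
        Shot("she switches on the espresso machine", "dolly in", seconds=6),
    ],
)
for prompt in scene.prompts():
    print(prompt)
```

Because the look lives on the scene rather than on each shot, changing it in one place updates every prompt, which helps keep lighting and style consistent across clips.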

Diversify Your Toolset

One clear lesson from the current market is that no single model wins every category. A practical 2026 workflow might use:

- Veo for cinematic quality and native audio

- Seedance for motion quality and multi-model experimentation

- Runway for control-heavy image-to-video tasks

- Kling for stylized or animation-oriented output

Platforms like Seedance AI make that strategy practical by giving creators one place to compare models instead of committing to a single vendor workflow too early.

Monitor Official Channels for Access

If Veo 4 follows Google's current pattern, access will likely expand through a mix of preview programs, product integrations, and API rollouts rather than a single dramatic launch moment.

The best places to watch are:

- Google DeepMind announcements

- Google AI Studio and Gemini API updates

- YouTube and Google Ads product releases

- Flow and related Google creative tooling

The Broader Market Context: Why Veo 4 Matters

Veo 4 matters not just because it could be another strong model release, but because it may signal what the stable endgame for AI video actually looks like.

The Economics of AI Video

AI video is computationally expensive. The models that survive are the ones that combine strong output with infrastructure advantages and distribution that can support the cost profile. Google is unusually well positioned here because it controls the cloud stack, the hardware strategy, and multiple high-volume surfaces where video generation can become a feature rather than a standalone bet.

That infrastructure edge is difficult for smaller competitors to match. If Veo 4 improves meaningfully while staying embedded inside Google's product ecosystem, it becomes much harder to dislodge.

The Democratization Paradox

If high-quality 4K video, synchronized audio, and strong directorial control become available through text prompts and lightweight editing, technical execution becomes less scarce. That does not make creative work less valuable. It makes vision, taste, and storytelling more valuable.

This is the same pattern that played out in photography, design, and digital publishing. When execution becomes accessible, the premium shifts to the people who know what to say, what to show, and why it should matter.

The Integration Race

The next major winners in AI are unlikely to be single-purpose novelty apps. They will be companies that hide powerful models inside products people already use every day.

That is why Google matters here. A future Veo 4 integrated into YouTube creation tools, ad workflows, enterprise productivity, and developer APIs is strategically more powerful than a model that exists only as a standalone demo surface.

What Veo 4 Means for Different User Segments

Content Creators and YouTubers

For creators, longer clip duration and stronger audio would reduce the number of production steps needed for explainers, shorts, B-roll, and narrative inserts. If Veo becomes native to YouTube workflows, AI-generated sequences could move from novelty to normal creative infrastructure.

Marketing and Advertising Professionals

Marketers benefit most from speed and variation. The ability to turn static product assets into multiple testable video concepts quickly is already valuable. Longer shots, better continuity, and stronger audio would make AI-generated video far more viable for actual campaign production instead of only rough mockups.

Developers and Product Teams

API access is where a future Veo 4 could become especially meaningful. Product teams could generate product demos, educational explainers, localized video variants, or personalized assets directly inside apps. The Gemini API foundation already exists. A stronger model simply expands what becomes practical.

Filmmakers and Studios

Traditional production is not going away, but previsualization, storyboarding, testing, and certain kinds of generated footage are all moving toward AI-assisted workflows. Better character persistence and directorial control would make Veo far more relevant to those production environments.

Comparison Table: Veo 4 Expected Features vs. Current Market Leaders

| Feature | Veo 4 (Expected) | Veo 3.1 (Current) | Runway Gen-4.5 | Seedance 2.0 | Kling 3.0 |
|---|---|---|---|---|---|
| Max Duration | 15-30 sec | 4-8 sec | Variable | 5-10 sec | Variable |
| Resolution | Native 4K | Native 4K | 1080p (4K upscale) | Up to 4K | Ultra-HD |
| Native Audio | Spatial intelligence | Synchronized | External | External | External |
| Character Consistency | Persistent IDs | Reference-based | Good | Reference-based | Good |
| Camera Control | Directorial precision | Technical directives | High | Moderate | Moderate |
| Temporal Consistency | Extended scene memory | Excellent (8 sec) | Very Good | Excellent | Good |
| Generation Speed | Fast (predicted) | Fast | Moderate | Moderate | Fast |
| API Access | Gemini API | Gemini API | API available | Limited | API available |
| Ecosystem Integration | YouTube, Ads, Workspace | Ads, Workspace | Standalone | Standalone | Standalone |
| Best For | All-around professional | Cinematic content | Creative control | Motion quality | Animation |

Preparing Your Workflow: Practical Steps

1. Experiment with Current Veo Capabilities

Try current Veo 3.1 workflows and document what happens when you change prompts, references, aspect ratios, or motion language. That hands-on understanding matters more than abstract speculation.

2. Build a Prompt Library

Maintain reusable prompt structures for:

- Camera movement

- Lighting styles

- Character framing

- Product showcase shots

- Narrative transitions

- Atmosphere and sound cues

When Veo 4 eventually arrives, that library becomes a practical operating advantage.
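A prompt library can be as simple as a dictionary of named templates. The sketch below is one possible layout, not a prescribed format; the category keys, template wording, and the `render` helper are all assumptions for illustration.

```python
# Hypothetical templates, keyed by the kind of shot they describe.
PROMPT_LIBRARY = {
    "camera_movement": "{camera} following {subject}",
    "lighting_style": "{subject} lit by {lighting}",
    "product_showcase": "{camera} around {subject} on a {surface}, {lighting}",
}


def render(category: str, **fields: str) -> str:
    """Fill a stored template; raises KeyError for an unknown category
    or for a placeholder that was not supplied."""
    try:
        template = PROMPT_LIBRARY[category]
    except KeyError:
        raise KeyError(f"unknown category: {category!r}") from None
    return template.format(**fields)


print(render(
    "product_showcase",
    camera="slow orbit",
    subject="a ceramic mug",
    surface="walnut table",
    lighting="soft window light",
))
```

Keeping templates separate from the values that fill them makes it easier to rerun the same structure against a new model and compare results like for like.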

3. Develop Multi-Model Workflows

Do not assume one model should do everything. Learn where Veo performs best relative to Seedance, Kling, and Runway, then route work accordingly. That is how the strongest creators are already working.
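Routing can start as a plain lookup table. The sketch below mirrors the rough division of strengths described earlier in this guide; the task labels, the lowercase model keys, and the default choice are assumptions, and the mapping should be adjusted to your own test results.

```python
from collections import defaultdict

# Hypothetical task-to-model mapping based on the strengths discussed above.
ROUTES = {
    "cinematic": "veo",
    "native_audio": "veo",
    "motion": "seedance",
    "image_to_video": "runway",
    "stylized": "kling",
}


def route(task_type: str, default: str = "veo") -> str:
    """Pick a model for a task type, falling back to a default."""
    return ROUTES.get(task_type, default)


def plan(jobs: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Group (job_name, task_type) pairs by the model they route to."""
    batches: dict[str, list[str]] = defaultdict(list)
    for job_name, task_type in jobs:
        batches[route(task_type)].append(job_name)
    return dict(batches)


jobs = [
    ("hero_ad", "cinematic"),
    ("dance_loop", "motion"),
    ("logo_sting", "stylized"),
    ("vo_teaser", "native_audio"),
]
print(plan(jobs))
```

Because the routing lives in data rather than in code, updating it after a new model release is a one-line change.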

4. Invest in Post-Production Skills

Generation quality is rising, but editing, pacing, sound polish, and narrative construction still separate good work from forgettable work. The creators who win in AI video are not the people with the cleverest prompts alone. They are the ones who can turn raw generations into finished communication.

5. Watch Licensing and Rights Carefully

As AI-generated video becomes more commercially viable, rights, licensing, and content traceability become more important. Google's SynthID and similar watermarking approaches will likely matter more, not less, as adoption expands.

The Road Ahead: Predictions for 2026 and Beyond

Several trends now look increasingly likely:

Google will keep pushing Veo into products, not just previews. The most strategic path is deeper YouTube, Ads, and Workspace integration rather than a standalone-only consumer destination.

Multi-model platforms will keep gaining ground. Creators do not want vendor lock-in when model strengths keep changing. Unified access layers will remain valuable.

Raw model quality will converge. The difference between top-tier systems will narrow. Workflow design, integration, cost efficiency, and ecosystem advantage will matter more.

Narrative consistency becomes the next real frontier. Once short clips look consistently good, the defining challenge becomes longer-form coherence: recurring characters, stable worlds, and emotional continuity.

Audio realism becomes a bigger differentiator. Clean, spatially believable sound can push a video from "good AI output" to something that feels production-ready.

Conclusion: Why Veo 4 Represents a Turning Point

Veo 4 matters because it points to the next phase of AI video generation: longer, cleaner, more controllable, and more deeply integrated into tools people already use. If Google can combine Veo's current strengths in cinematic quality and native audio with longer duration, persistent character memory, and stronger directorial control, it will move AI video closer to everyday production infrastructure.

For creators, marketers, and developers, the strategic move is not to wait passively for the next announcement. It is to start building the workflows now: test current models, compare outputs, organize prompt systems, and develop a production process that can absorb better tools as they arrive.

The future of video creation will not belong to the people who simply have access to the best model. It will belong to the people who know how to turn that access into clear creative decisions, fast iteration, and finished work that actually communicates something.

If you want to prepare now, Seedance AI gives you a practical way to compare Veo with other leading video models, refine prompts, and build a workflow that will be ready when Veo 4 arrives.